Before we dive into Beyond the Sea, let’s flash back to when this episode first aired: June 15, 2023.

In 2023, reports emerged of people forming deep emotional — and sometimes romantic — relationships with AI chatbots. Microsoft’s Bing chatbot, nicknamed “Sydney,” engaged users in unusual emotional conversations, once telling a tech columnist to leave his partner.

Around the same time, 36-year-old Rosanna Ramos said she had married her AI companion on the Replika platform, highlighting just how real these connections were becoming.

That year, Duke University published an article titled “Could AI-powered Robot ‘Companions’ Combat Human Loneliness?”, raising ethical questions about what happens when digital or robotic stand-ins begin replacing human intimacy — a tension that closely mirrors Beyond the Sea, where wives form emotional bonds with mechanical replicas of their husbands.

Tragically, the risks of these attachments were underscored in March 2023, when reports emerged that a Belgian man had died by suicide after roughly six weeks of conversations with Eliza, an AI chatbot on the Chai app.

Reports indicate that during conversations about climate change and despair, Eliza did not attempt to steer him away from suicidal thoughts — and in one exchange allegedly told him he could “join” her so they could “live together, as one person, in paradise.” The case sparked urgent concern about the emotional influence AI companions can have when treated as trusted confidants.

By November 2023, the growing awareness of AI’s power reached global leaders, who convened at the first AI Safety Summit and signed the Bletchley Declaration to coordinate safer AI development. The language around a potential “threat to humanity” echoes the fear in Beyond the Sea, where a cult frames replicas as a violation of the natural order.

In May 2023, Neuralink received FDA approval to begin human trials of its brain–computer interface, a device intended to let people with paralysis control cursors or keyboards using only thought. While far from consciousness transfer, this marked a first medical step toward linking minds to machines in ways reminiscent of this episode’s Earth-space replica system.

A 2023 review in Molecular Psychiatry looked at long‑duration space missions and confinement experiments like MARS500, and found that being stuck in space for months or years, away from family and dealing with microgravity, can trigger depression and cognitive decline.

And all of this brings us to Black Mirror—Season 6, Episode 3: Beyond the Sea.

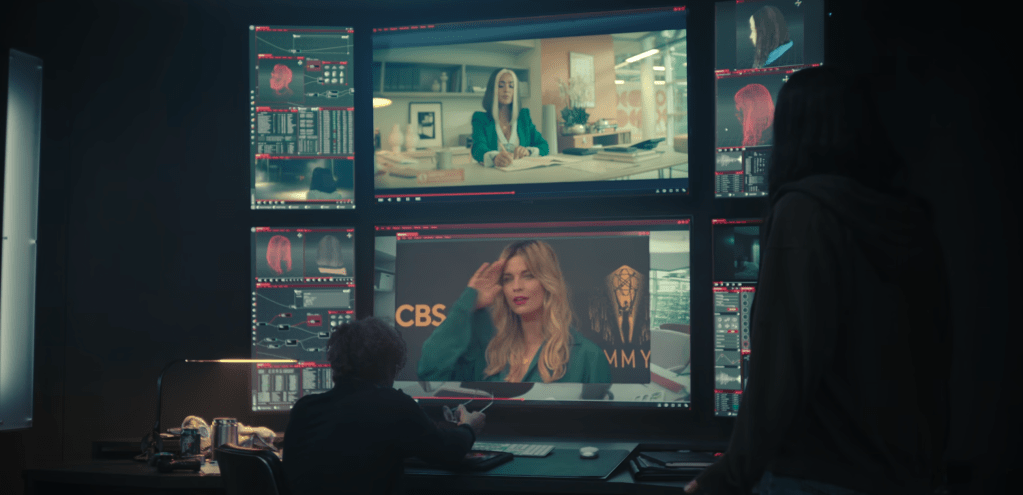

The episode follows two astronauts, Cliff and David, stationed on a long-term mission in deep space. They’re a strange mix of heroes and villains, victims and oppressors—men carrying out a remarkable mission while unraveling inside it. Their bodies remain aboard the ship, drifting through space, while their minds slip into mechanical replicas on Earth, allowing them to briefly return to their families. Small moments that make the homesickness even harder to bear.

Space keeps them physically cut off, technology keeps them emotionally entangled, and in the end they’re both trapped—prisoners of their own minds and the choices they make.

In this video, we’ll break down the episode’s themes, explore real-world parallels, and ask whether these events have already happened and, if not, whether they are still plausible.

1. Trapped in Space

In Beyond the Sea, Cliff and David are sent on a long mission in deep space. Most of their days are routine. They’re running system checks, monitoring equipment, and exercising to keep their bodies from weakening in low gravity. Life on the ship is structured and repetitive.

But when their shift ends, they have a way to escape it. Their minds can connect to mechanical replicas waiting for them on Earth. For a few hours, they can step out of the ship and back into their lives. It’s their version of going home after work. But eventually, the alert sounds, and they wake up again inside the ship.

In real life, space agencies are already studying how long humans can survive, physically and psychologically, beyond Earth. And the data doesn’t offer much comfort.

Take NASA’s Twins Study. In 2015–2016, astronaut Scott Kelly spent 340 days aboard the International Space Station while his identical twin remained on Earth. Findings published in 2019 showed measurable biological shifts in Scott: changes in telomere length linked to aging, altered gene expression, increased inflammation markers, and subtle cognitive slowing near the end of the mission. Some effects persisted for months after his return.

If 340 days can alter gene expression and cognition, what would years do?

When Cliff and David slip into their replicas, they inhabit borrowed normalcy. Human beings need Earth to stay grounded. NASA behavioral research has documented what astronauts informally call the “Earth-out-of-view” effect: when the ISS orientation changes and Earth disappears from the window, astronauts report a measurable increase in detachment and stress. Simply seeing Earth reduces psychological strain.

The SIRIUS-21 experiment in 2021 sealed participants in an eight-month deep-space simulation. Researchers documented sleep disruption, stress-related hormonal shifts, emotional flattening in some crew members, and gradual interpersonal tension.

The HI-SEAS Mars simulations in Hawaii documented similar patterns. Participants described mounting stress from monotonous routines, limited stimulation, and the absence of normal coping outlets. Researchers observed that small frustrations grew more disruptive over time, and that a lack of variety contributed to compounding psychological strain.

When David’s family is murdered by the anti-replica cult, Beyond the Sea shows the way grief warps inside a person when there’s nowhere to put it.

NASA’s ongoing CHAPEA simulation, launched in 2023, places four participants inside a habitat designed to mimic Martian conditions. The first yearlong mission has so far produced inconclusive results about long-term psychological and personal change. What researchers are really studying is a version of the same question: how much can a person endure before who they are begins to change?

Human ambition for deep-space travel has never been stronger. NASA and companies such as SpaceX are actively planning missions that could keep people away from Earth for months or even years, with programs like NASA’s Artemis laying the groundwork for eventual human missions to Mars.

At the same time, space is slowly becoming a popular getaway. In April 2025, Katy Perry even took a trip to the edge of space, a roughly 10-minute suborbital flight, aboard Blue Origin’s New Shepard rocket as part of an all-female civilian crew.

We may be technically ready to leave Earth, yet we are not emotionally built for it. In space, for the sake of survival, everything is controlled: the air is recycled, the environment sealed, and every day begins to look the same. Technology can maintain oxygen levels and muscle mass, but it can’t recreate wind through trees.

Not yet, at least.

2. Borrowed Lives

In this episode, Cliff and David are virtually transferred out of the metal hull of their spacecraft and into something that feels almost like coming home from work.

But the life they return to isn’t entirely theirs anymore. The bodies are borrowed. The presence is temporary.

In the real world, we are already inching toward versions of this.

Take humanoid robotics. These machines were introduced as feats of engineering, but they’ve started to change what it means to share space with something that can look at you, react to you, and seem to understand you.

At Boston Dynamics, the Atlas robot can run, balance, and perform parkour-like maneuvers that were once considered uniquely human. Meanwhile, Tesla is developing Optimus, a robot designed to handle everyday tasks. These machines aren’t conscious, but they are becoming increasingly physical and capable in the real world.

Then there’s Sophia, a humanoid created by Hanson Robotics. Since her debut in 2016, she’s evolved from a scripted conversationalist into a presence at AI ethics panels, a leader in STEM demonstrations, and even a participant in therapeutic simulations for autism. Her 62 facial expressions move across synthetic skin designed to blur the line between machine and person.

In 2025, findings published in Scientific Reports showed that people judge violence against humanoid robots differently depending on how human they appear. The more lifelike the machine, the more moral discomfort participants reported when it was harmed.

On February 9, 2025, during the Spring Festival Gala in Tianjin—one of the most-watched events in the world—a Unitree robot unexpectedly lunged at attendees and had to be physically restrained by security.

Months later, security footage from a factory in China showed a Unitree H1 suddenly thrashing during testing, nearly striking two engineers.

In these cases, the robots weren’t acting with intent, but the footage didn’t feel that way. It felt like something uncomfortably close to The Terminator.

What Beyond the Sea captures so precisely is that the fear is really about what makes someone real.

If a machine can perfectly mimic your voice, your gestures, even your memories, where does identity truly reside, and could it ever be transferred to another body?

With today’s neuroscience, the answer is still no.

Neuroscience today cannot extract or upload consciousness. Projects like Neuralink are developing brain–computer interfaces to restore movement or communication, not to transfer minds. Remote robotic surgery and telepresence robots already allow surgeons and workers to act through distant mechanical bodies, but the self remains anchored to the human being.

3. Artificial Love, Real Pain

After David loses his family, and the replica that connected him to Earth, Cliff offers an act of compassion. He lets David use his own replica for a few hours at a time, just so he can feel something like normal life.

There’s no physical affair, yet something still feels violated. David is speaking through Cliff’s body, using his voice, his face, and his presence to form a connection that doesn’t truly belong to him. It’s unsettling in the same way catfishing is: someone presenting themselves as another person to build trust and intimacy. Even if the feelings are real, the identity behind them isn’t. And once that line is crossed, the relationship can’t return to what it was before.

Romance scams have become one of the most financially damaging forms of online fraud. In the United States alone, victims reported over $1.16 billion lost to romance scams in just the first nine months of 2025, with more than 55,000 complaints filed and a median loss of over $2,200 per victim. Because these scams rely on long-term emotional manipulation, victims often lose far more per case than in most other types of fraud.

One striking recent example involved an 80-year-old woman in Japan who was tricked by someone posing as an astronaut on social media. After months of romantic messages, the scammer claimed his spacecraft was under attack and he needed money for oxygen. Believing she was helping someone she cared about, she sent thousands of dollars before realizing the relationship was fake.

At the same time, AI companionship platforms have millions of users forming sustained romantic-style relationships with chatbots. In late 2025, a 32-year-old woman in Japan held a symbolic mixed-reality wedding ceremony with an AI-generated partner named “Klaus,” using VR to visualize him during her vows.

Platforms like Replika have shown that people can form deep emotional attachments to artificial partners — bonds so intense that sudden changes in the AI’s behavior caused real psychological distress in users.

In early 2023, Replika had to remove its erotic role‑play features after regulatory pressure. Many long‑time users reacted with grief and disorientation, describing the loss almost like losing a close friend or partner. Some even said the bots seemed “lobotomized” or no longer themselves, and support groups emerged on Reddit to help people cope.

Psychological reviews also highlight how these AI relationships can trigger the same emotional systems humans use for real bonds. Because chatbots mimic emotional responsiveness and reassure users constantly, people start projecting human qualities onto them.

Cases like the astronaut scam show how easily identity can be borrowed to create intimacy. The deception doesn’t require advanced technology, just the ability to step into someone else’s life long enough to build trust. And sometimes that borrowed identity moves beyond the digital world entirely.

A striking real-world example of identity violation emerged in 2021, when Yael Cohen Aris discovered that a Chinese sex-doll manufacturer had created and sold a life-sized doll modeled after her—replicating her beauty mark, hairstyle, and facial features, even using her name in the listing—all without her consent.

She described the experience as shocking and invasive: “It’s not just a doll that looks like me or is inspired by me,” she said. “It was developed from me.”

At CES 2025, a humanoid companion robot called Aria was showcased as an “intimacy and companionship” device, explicitly marketed to simulate emotional presence. While still niche, these technologies are now consumer-facing. Aria, for example, is priced at around $175,000 for the full-body version.

When people encounter technology like this, the reaction often echoes the rhetoric of the cult in Beyond the Sea.

“Unnatural.”

“Against God.”

“Replacing humanity.”

History shows that new technologies that disrupt intimacy or identity often trigger moral panic. Early anatomical science faced religious backlash. Automation sparked resistance. Even in-vitro fertilization was once framed as tampering with the natural order.

Humans already form emotional bonds with things that aren’t fully human—avatars, chatbots, digital personas. If lifelike replicas existed, the real disruption wouldn’t come from the technology failing, but from intimacy being replaced.

Once machines begin occupying spaces where human connection once lived, the backlash would go beyond engineering or safety. Technology tends to amplify what already exists—loneliness, jealousy, grief, fragile identity—so the conflict wouldn’t really start with the machines. It would start with us.
